Somatosensory and Body Lab

Prof Elena Azañón, PhD in Psychology

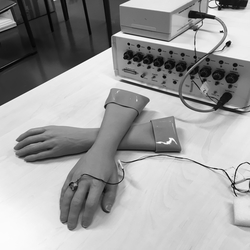

Welcome to the Somatosensory and Body lab! Our research focuses on how we experience touch. In particular, we study the complex multisensory mechanisms that allow us to extract spatial information from touch using information about posture, and how this information is used to construct representations of our body and to guide our actions. We combine a wide range of methods from psychology and cognitive neuroscience, including psychophysics, oculomotor measures, and electro- and magnetoencephalography (EEG, MEG), in healthy adults, children, and patients with focal neurological dysfunction (e.g., neglect).

Research

- A first line of research investigates how the spatial coordinates of touch are transformed across spatial reference frames at successive stages of tactile processing. Just as the retinotopic coordinates of visual input must be remapped to maintain visual stability across eye movements, touch also needs to be remapped as we move, i.e., from skin-based space (e.g., a tickle on the right arm) to external, visually defined space (e.g., a tickle on the right arm, which is currently raised above the head). This is necessary because the location of a touch relative to the rest of the body and to objects in the environment changes as we move. The characterization of this process, including its timing and neural bases, is a central topic of my research (e.g., Azañón & Soto-Faraco, 2008, Curr Biol; Azañón et al., 2010, Eur J Neurosci; Azañón et al., 2010, Curr Biol).

- A second line of research investigates the role of visual and motor information in the formation of tactile spatial perception in healthy individuals, both at early stages of development (Azañón et al., 2017, Child Development) and during adulthood (e.g., Azañón & Soto-Faraco, 2007, Exp Brain Res; Azañón et al., 2015, Curr Biol). Moreover, I have devoted part of my research to connecting tactile spatial perception with higher levels of spatial cognition and body representation (Azañón et al., 2016, Cognition; Azañón et al., 2016, JEP:HPP), including the role that vision of the body plays in the perception of our body image (in particular the effect of the thin body ideal, which is shaped and reinforced by many social influences).

- In parallel, we are working on the role that prior information has in the perception of tactile and visual events (see the newly funded project on this topic in the Research tab), and on the distinction between lower- and higher-level sensory processing in touch (Calzolari, Azañón, et al., 2017, PNAS).

Newly Funded Projects

- Altering cutaneous sensations by autosuggestion

Autosuggestion is one form of self-suggestion. It follows the idea that the constant, inner repetition of a thought can be converted into corresponding ideomotor, ideosensory, and ideoaffective states. Autosuggestion, therefore, assumes that one’s own mind has the power to convert a thought into reality as long as the thought is within the realm of possibility. This concept is certainly captivating, and is nowadays used in many life and job coaching approaches. However, empirical evidence on the extent to which autosuggestion can indeed alter one’s own neurophysiological bodily states is so far scarce. Here, we use state-of-the-art neuroimaging technology (7 Tesla functional magnetic resonance imaging, fMRI) together with psychophysical modelling techniques and electrophysiological recordings (EEG) to answer the question of how the inner repetition of an idea influences tactile sensations on the body at a phenomenological, behavioural, and neurophysiological level.

In collaboration with Dr Esther Kuehn, Institute for Cognitive Neurology and Dementia Research, Magdeburg, Germany.

Project funded by the Bial Foundation Research Grants 2019.

- Dividing space: The use of categorical information in the remembering of tactile and visual locations

Humans naturally divide space into categories, forming boundaries and central values (Huttenlocher et al., 1991, Psychol Rev). These categories are believed to provide a fundamental source of information used to structure our perception of the world (Cheng et al., 2007, Psychol Bull). For instance, when remembering the location of a stimulus, the inexact memory of that location is averaged with a prototypical value for the relevant category (e.g., a location central to the area where an object could be). The use of prior information increases average accuracy, though at the expense of introducing systematic bias (Huttenlocher et al., 2004, Cognition). Revealing the internal structure of these biases can become a powerful tool to understand how humans categorize space, and the impact that prior information has on perception. In ongoing research, we have developed novel methods to generate complete maps of the internal structure of localization biases from memory, for both visual and tactile events. In the present project, we will provide a deeper understanding of the nature and role of prototypical information for spatial localization in vision, touch and proprioception.
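The trade-off between accuracy and bias described above can be illustrated with a minimal simulation. This is only a sketch under stated assumptions, not the method used in the project: the estimate is taken to be a simple weighted average of a noisy memory trace and a category prototype, and the function names, the weight of 0.6, the noise level, and the one-dimensional locations are all hypothetical values chosen for illustration.

```python
import random
import statistics


def blended_estimate(memory, prototype, weight):
    """Weighted average of a noisy memory trace and a category prototype.

    weight = 1.0 uses the memory alone; lower values pull the estimate
    toward the prototype. (Illustrative assumption, not a fitted model.)
    """
    return weight * memory + (1.0 - weight) * prototype


def simulate(true_location, prototype, memory_sd, weight, n_trials=20000, seed=0):
    """Return (bias, rmse) of the blended estimate over simulated trials."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_trials):
        # The memory trace is the true location plus Gaussian noise.
        memory = rng.gauss(true_location, memory_sd)
        estimates.append(blended_estimate(memory, prototype, weight))
    bias = statistics.fmean(estimates) - true_location
    squared_errors = [(e - true_location) ** 2 for e in estimates]
    rmse = statistics.fmean(squared_errors) ** 0.5
    return bias, rmse


# Hypothetical values in arbitrary units along one spatial dimension.
bias_raw, rmse_raw = simulate(true_location=2.0, prototype=0.0, memory_sd=3.0, weight=1.0)
bias_mix, rmse_mix = simulate(true_location=2.0, prototype=0.0, memory_sd=3.0, weight=0.6)

print(f"memory only:      bias={bias_raw:+.2f}, rmse={rmse_raw:.2f}")
print(f"with prototype:   bias={bias_mix:+.2f}, rmse={rmse_mix:.2f}")
```

With these illustrative settings, the blended estimate is systematically shifted toward the prototype but has a smaller overall error than the memory trace alone. The benefit depends on the assumed values: if the true location lies far from the prototype relative to the memory noise, the added bias can outweigh the reduction in variability.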

In collaboration with Prof Matthew Longo and Dr Raffaele Tucciarelli, Birkbeck, University of London, United Kingdom.

Project funded by the Experimental Psychology Society (EPS) Small Grants 2019.